Your AI prompts shouldn't leak your data.

Ki! sits between you and any LLM. Names, emails, account numbers, health data — scrubbed locally in under 20 ms before the prompt is sent. Originals restored in the response. Works with Claude, GPT, Ollama, and inside Cursor, Windsurf, and Claude Code via MCP.

No spam. No credit card. Cancel any time.

See it in action

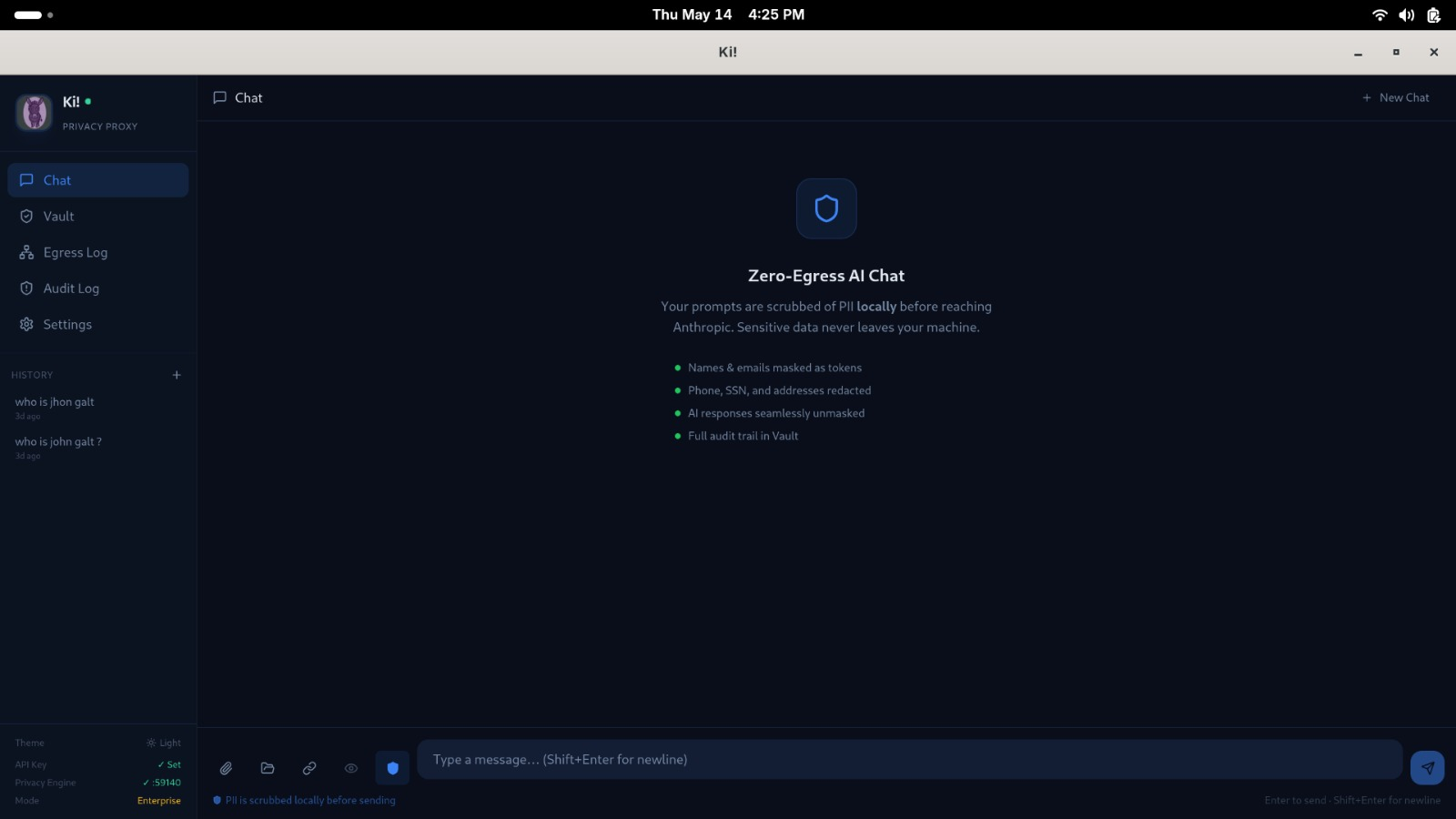

PII masked. Originals restored.

Privacy layer for any LLM

Whether you use Claude, GPT-4, Ollama, or Gemini, Ki! intercepts the prompt, scrubs sensitive data, and unmasks the reply. Change nothing in your workflow.

Works inside your AI IDE

Ki! ships as an MCP server. Cursor, Windsurf, Claude Code, and VS Code can call Ki! directly — every snippet you share is scrubbed before it reaches the model.

100% local, fully offline

The scrubbing engine runs entirely on your machine. No telemetry, no cloud dependency. Your API key lives in your OS keychain, never in a plaintext file on disk.

MCP — Model Context Protocol

Privacy inside the tools you already use

Add Ki! as an MCP server and every code snippet, document, or prompt you share with the AI is automatically scrubbed before it reaches the model.

"ki-mcp": { "command": "ki-mcp" }

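Clients that read a JSON config file typically expect the entry above inside a top-level map of servers. The sketch below assumes that map is called `mcpServers` and that the file lives at a path you supply. Both are assumptions that vary by client, so check your client's MCP documentation. The helper `add_ki_server` is hypothetical and not part of Ki!.

```python
import json
from pathlib import Path


def add_ki_server(config_path: Path) -> dict:
    """Merge the ki-mcp entry into a client config file, keeping other servers."""
    config = {}
    if config_path.exists():
        config = json.loads(config_path.read_text(encoding="utf-8"))

    # "mcpServers" is an assumed key name; some clients use a different one.
    servers = config.setdefault("mcpServers", {})

    # The entry itself is taken verbatim from the snippet above.
    servers["ki-mcp"] = {"command": "ki-mcp"}

    config_path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

Calling `add_ki_server(Path("path/to/your/client/config.json"))` leaves existing server entries in place and only adds or overwrites `ki-mcp`.
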
The pipeline

Three steps. Invisible to you.

- 01 You type normally. Write your prompt as you always would: client names, account numbers, medical history. Ki! watches.

- 02 Ki! scrubs before send. Custom rules → regex → allowlist → local AI NER. Four passes in under 20 ms. The LLM receives placeholders such as [PERSON_0] and [EMAIL_0], never the real data.

- 03 Response restored. Ki! reads the reply and swaps tokens back. You see the full, correct response. The LLM never touched the originals.
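
The scrub-and-restore round trip described in the steps above can be sketched in a few lines of Python. This is a minimal illustration, not Ki!'s engine: the function names `scrub` and `restore`, the email pattern, and the exact-match "custom rules" are all assumptions, the allowlist is applied as a filter inside each pass, and the local NER pass is left out entirely.

```python
import re

# Illustrative pattern only (an assumption); real email detection needs more care.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def scrub(prompt: str, custom_names: list[str], allowlist: set[str]) -> tuple[str, dict[str, str]]:
    """Replace sensitive values with placeholder tokens.

    Returns the masked prompt and a token -> original mapping for later restore.
    """
    mapping: dict[str, str] = {}
    counters: dict[str, int] = {}

    def token_for(label: str, value: str) -> str:
        # Reuse the same token when a value repeats, so the reply stays consistent.
        for token, original in mapping.items():
            if original == value:
                return token
        index = counters.get(label, 0)
        counters[label] = index + 1
        token = f"[{label}_{index}]"
        mapping[token] = value
        return token

    # Pass 1: custom rules, modeled here as exact-match names supplied by the user.
    for name in custom_names:
        if name not in allowlist and name in prompt:
            prompt = prompt.replace(name, token_for("PERSON", name))

    # Pass 2: regex detection, skipping anything on the allowlist.
    def mask_email(match: re.Match) -> str:
        value = match.group(0)
        return value if value in allowlist else token_for("EMAIL", value)

    prompt = EMAIL_RE.sub(mask_email, prompt)
    return prompt, mapping


def restore(reply: str, mapping: dict[str, str]) -> str:
    """Swap placeholder tokens in the model's reply back to the originals."""
    for token, original in mapping.items():
        reply = reply.replace(token, original)
    return reply
```

The mapping never leaves the local process. Only the masked prompt would be sent to the model, and `restore` is applied to whatever comes back.
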

Shape Ki! from day one.

Get 3 months free.

Beta testers get early access, direct input on the roadmap, and 3 months of Sovereign tier — the full enterprise feature set — at no cost.
